AI tool unifies fragmented cell maps into spatial atlases across tissues

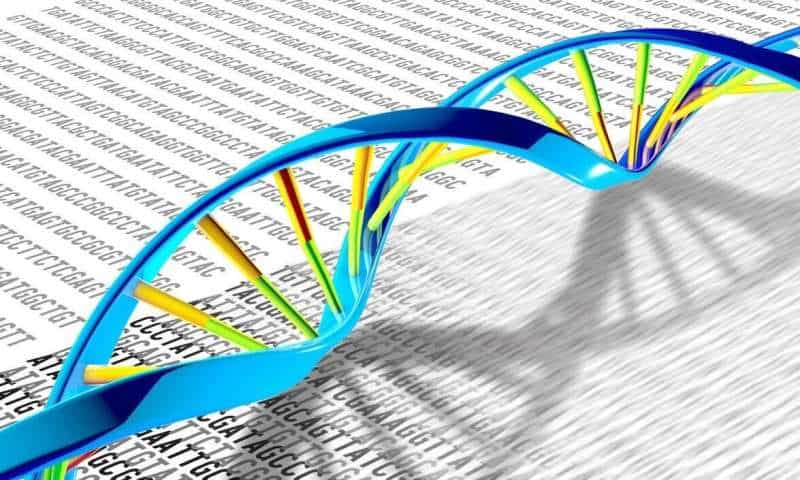

A new computational method could dramatically accelerate efforts to map the body’s cells in space, according to a study published in Nature Genetics. Spatial multi-omics technologies, which produce what are often described as ultra-high-resolution maps of tissues, allow scientists to see not only which genes or proteins are active in a cell, but exactly where that activity occurs. That spatial context is critical for understanding complex structures such as the brain, immune tissues and developing embryos.

Unfortunately, capturing multiple molecular layers at once remains expensive and technically challenging, said David Gate, Ph.D., assistant professor in the Ken and Ruth Davee Department of Neurology’s Division of Behavioral Neurology, who was a co-author of the study.

“In practice, investigators end up with ‘mosaic’ datasets: different slices or batches that each capture only some of the layers, often from different technologies or labs, with batch effects and missing pieces,” said Gate, who also leads the Abrams Research Center on Neurogenomics.

A new tool to unify data

The new computational method, dubbed SpaMosaic, was designed to solve this growing problem. Developed by a collaborative team led by computational investigators, the tool uses artificial intelligence to align and integrate spatial datasets.

To create the new tool, investigators combined contrastive learning, which helps AI models learn meaningful similarities and differences across datasets, with graph neural networks that account for spatial relationships between neighboring cells. The result is a shared representation in which RNA, protein, chromatin accessibility and histone modification data can be analyzed together, even when individual datasets measure only a subset of these molecular layers.
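The article does not describe SpaMosaic's code, so the sketch below is only a minimal, hypothetical illustration of the two ingredients named above, not SpaMosaic's implementation. A parameter-free neighbour-averaging step stands in for a trained graph neural network layer, and a one-directional InfoNCE loss (one common form of contrastive learning) rewards embeddings of the same spot, seen through two molecular layers, for being more similar to each other than to other spots. It assumes each layer has already been encoded into vectors of equal length; the function names and toy data are made up for illustration.

```python
import math

# Hypothetical helper names throughout; this is NOT SpaMosaic's code.


def knn_graph(coords, k):
    """For each spot, return the indices of its k nearest spatial neighbours."""
    neighbours = []
    for i, (xi, yi) in enumerate(coords):
        dists = sorted(
            (math.hypot(xi - xj, yi - yj), j)
            for j, (xj, yj) in enumerate(coords)
            if j != i
        )
        neighbours.append([j for _, j in dists[:k]])
    return neighbours


def graph_smooth(features, neighbours):
    """One parameter-free message-passing step: average each spot with its neighbours.

    A trained GNN layer would also apply learned weights; this stand-in omits them.
    """
    smoothed = []
    for i, row in enumerate(features):
        group = [row] + [features[j] for j in neighbours[i]]
        smoothed.append([sum(col) / len(group) for col in zip(*group)])
    return smoothed


def normalize(vec):
    """Scale a vector to unit length so dot products become cosine similarities."""
    norm = math.sqrt(sum(x * x for x in vec)) or 1.0
    return [x / norm for x in vec]


def info_nce(emb_a, emb_b, temperature=0.1):
    """One-directional InfoNCE loss.

    Row i of emb_a and row i of emb_b are the same spot seen through two
    molecular layers (the positive pair); every other row of emb_b is a negative.
    Lower loss means matching spots are more similar than non-matching ones.
    """
    a = [normalize(v) for v in emb_a]
    b = [normalize(v) for v in emb_b]
    total = 0.0
    for i, ai in enumerate(a):
        logits = [sum(x * y for x, y in zip(ai, bj)) / temperature for bj in b]
        # log-sum-exp with the maximum subtracted for numerical stability
        m = max(logits)
        log_denom = m + math.log(sum(math.exp(z - m) for z in logits))
        total += log_denom - logits[i]
    return total / len(a)


if __name__ == "__main__":
    coords = [(0, 0), (1, 0), (0, 2), (5, 5)]  # toy spot positions
    rna = [[1.0, 0.0], [0.9, 0.1], [0.2, 0.8], [0.0, 1.0]]  # toy "RNA" embeddings
    protein = [[0.8, 0.2], [1.0, 0.0], [0.1, 0.9], [0.0, 1.0]]  # toy "protein" embeddings
    nbrs = knn_graph(coords, k=1)
    loss = info_nce(graph_smooth(rna, nbrs), graph_smooth(protein, nbrs))
    print(f"contrastive loss on toy data: {loss:.3f}")
```

In a real model, this loss would be minimized by gradient descent over learned encoder and graph-layer weights; the sketch only evaluates it once on fixed toy vectors to show what the objective measures.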

Performance across tissues and species

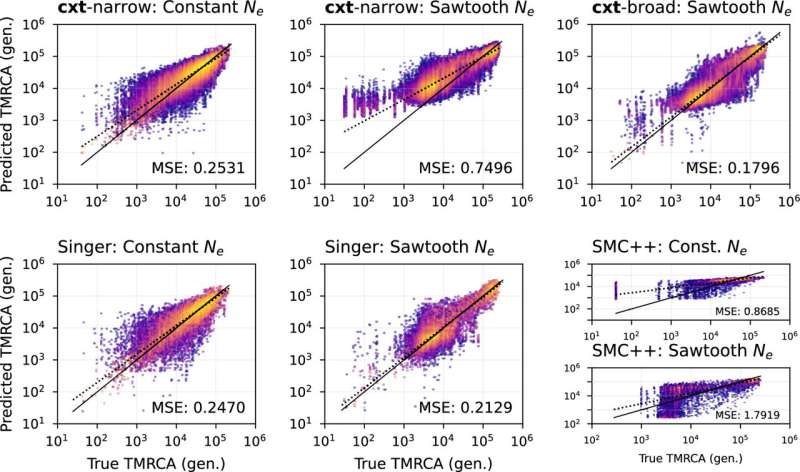

In benchmarking experiments, SpaMosaic consistently outperformed existing integration methods on both simulated data and real-world datasets spanning mouse brain development, mouse embryos and human immune tissues such as lymph node and tonsil. The investigators found that the tool excelled at identifying biologically meaningful spatial domains—regions of tissue with shared functional identity—even when datasets came from different technologies or developmental stages.

“SpaMosaic is also effective at removing technical ‘batch effects’ (like differences in how samples were processed) while keeping the real biology intact,” Gate said.

Predicting missing molecular layers

One of SpaMosaic’s most distinctive capabilities is predicting molecular layers that were never directly measured. In a large mosaic dataset of the mouse brain, the tool inferred histone modification patterns in regions where only transcriptomic data were available.

“SpaMosaic filled in the gaps and actually revealed stronger links between gene activity and epigenetic regulation than the directly measured chromatin data sometimes did,” Gate said.

Implications for atlases and neuroscience

The findings suggest the method can uncover regulatory relationships between molecular layers, offering an alternative to costly, technically demanding experiments. Instead of being limited by what a single experiment can measure, investigators can now combine data across studies, platforms and labs, Gate said.

“This is a real game-changer for building true multi-omics ‘atlases’ of tissues,” he said. “For neuroscience (our focus), this means better maps of brain development, neuroinflammation, and eventually disease states like Alzheimer’s or ALS, where spatial relationships and multi-layer regulation are critical. It accelerates discovery without requiring every lab to re-do perfect multi-modal experiments on every sample.”

Next steps for SpaMosaic development

The team is already exploring next steps, including scaling SpaMosaic to even larger datasets. Gate and his collaborators also plan further tests to assess how reliable the method’s predicted data are, he said.

“This project is a great example of what happens when computational innovators and experimental biologists work closely together,” Gate said. “Tools like SpaMosaic are going to democratize spatial multi-omics, letting more labs contribute to and benefit from large-scale tissue atlases.”