Natural competition between brain circuits may boost information processing

Over the past decades, neuroscience studies have painted an increasingly detailed picture of the human brain, its organization and how it supports various functions. To plan and execute desired behaviors in changing circumstances, networks of neurons in the brain can either work together or suppress each other, thus employing both cooperative and competitive interaction strategies.

Researchers at the University of Oxford, University of Cambridge, McGill University, University of Aarhus and Pompeu Fabra University recently set out to better understand the dynamics of the mammalian brain, specifically how its architecture balances cooperative and competitive interactions between neural circuits. Their paper, published in Nature Neuroscience, offers new insight that could both improve the understanding of the brain and inform the development of brain-inspired computational models.

“Building models of the brain is an important part of modern neuroscience,” Andrea Luppi, first author of the paper, told Medical Xpress. “As Nobel winner Richard Feynman said, ‘what I cannot create, I do not understand.’ Most current models, however, share a limitation. Everyday experience, from focusing attention to switching between tasks, also reveals that brain systems must compete for limited resources.

“Our brains cannot do everything at once, and not all regions can be active together all the time. Yet most macroscale brain simulations run in the past 20 years have not taken competitive interactions into account, instead forcing regions to cooperate.”

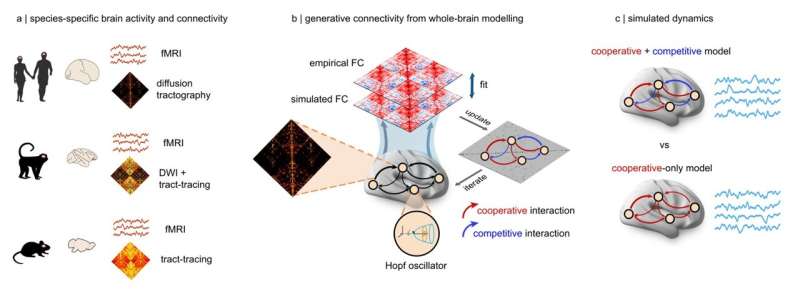

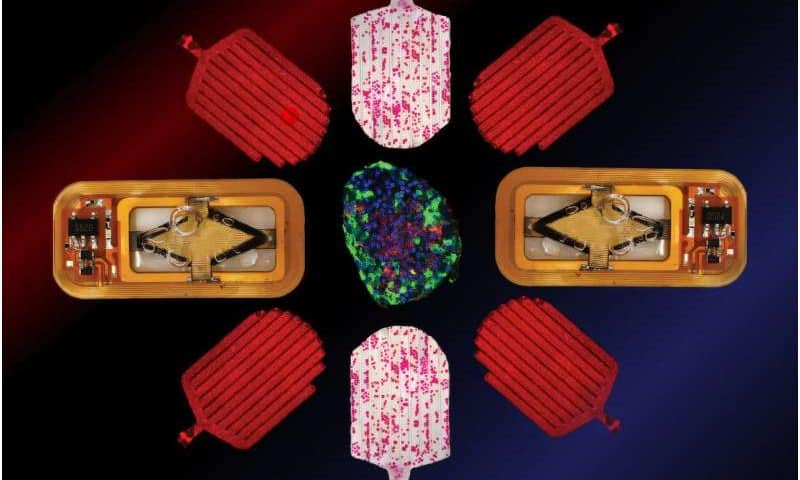

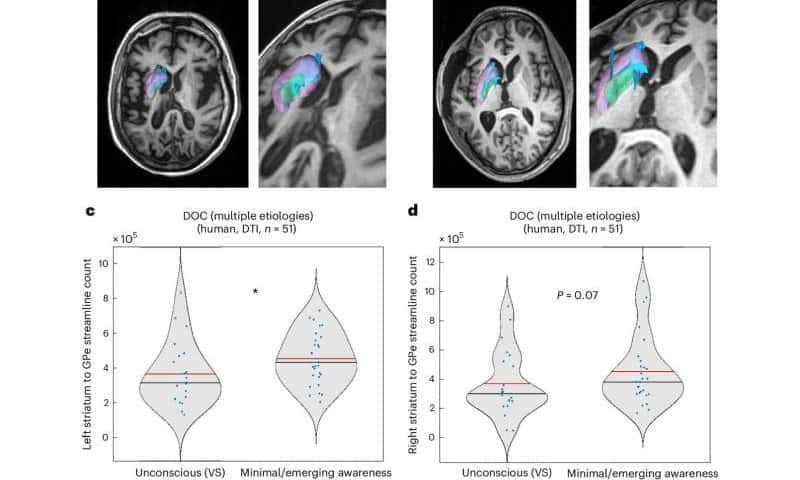

Superior computational performance of connectome-based neuromorphic networks with competitive generative interactions. Credit: Nature Neuroscience (2026). DOI: 10.1038/s41593-026-02205-3

In earlier computer simulations of the human brain, the activation of one brain region prompted downstream neighboring regions to also increase their activity. This often resulted in an excess of cooperation between connected regions, which led to the emergence of overly synchronized states that are rarely observed in the human brain or in the brains of other mammals.

“We had a simple question: should we include competition in our models and would this lead to better models?” said Luppi.

Modeling the brains of mice, macaques and humans

Luppi and his colleagues built computational models that simulated activity across the entire mammalian cortex. These models were created using available imaging data that map the connections in the brains of humans, macaques and mice.

“We compared two types of brain models: the traditional one in which all interactions between brain regions were cooperative, and another in which regions could either excite or suppress each other’s activity (essentially competing for who is active),” explained Luppi.

“Across all three species, the models that included competitive interactions consistently outperformed cooperative-only models, being more realistic. Competitive interactions act as a stabilizing force, preventing runaway activity and allowing different brain systems to take turns in shaping the direction of the brain’s ebbs and flows.”
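The stabilizing effect Luppi describes can be illustrated with a toy example. The sketch below is not the model used in the paper: it assumes a small network of Kuramoto phase oscillators, a standard textbook model of synchronization, and every name and parameter in it (`simulate_order`, `build_weights`, the network size, the coupling strength) is chosen purely for illustration. It compares a network in which every connection pulls units into step with one in which a random half of the connections push them apart, and reports how synchronized each network ends up (0 means no synchrony, 1 means full synchrony).

```python
import math
import random


def simulate_order(weights, freqs, phases, coupling=2.0, dt=0.05, steps=2000):
    """Euler-integrate Kuramoto oscillators; return mean synchrony over the second half."""
    n = len(phases)
    theta = list(phases)
    total, count = 0.0, 0
    for step in range(steps):
        new_theta = []
        for i in range(n):
            # Positive weights pull unit i toward unit j; negative weights push it away.
            pull = sum(weights[i][j] * math.sin(theta[j] - theta[i]) for j in range(n))
            new_theta.append(theta[i] + dt * (freqs[i] + coupling / n * pull))
        theta = new_theta
        if step >= steps // 2:
            # Order parameter: length of the average phase vector.
            re = sum(math.cos(t) for t in theta) / n
            im = sum(math.sin(t) for t in theta) / n
            total += math.hypot(re, im)
            count += 1
    return total / count


def build_weights(n, rng, signed):
    """Symmetric all-to-all weights: +1 everywhere, or random +1/-1 if signed."""
    w = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            value = rng.choice([1.0, -1.0]) if signed else 1.0
            w[i][j] = w[j][i] = value
    return w


rng = random.Random(0)  # fixed seed so the run is repeatable
n = 20                  # hypothetical network size
freqs = [rng.gauss(0.0, 0.1) for _ in range(n)]
phases = [rng.uniform(0.0, 2 * math.pi) for _ in range(n)]

# Both networks start from the same phases and natural frequencies.
r_coop = simulate_order(build_weights(n, rng, signed=False), freqs, phases)
r_comp = simulate_order(build_weights(n, rng, signed=True), freqs, phases)
print(f"cooperative-only synchrony: {r_coop:.2f}")
print(f"with competition:           {r_comp:.2f}")
```

In this toy setting, the cooperative-only network locks into near-total synchrony, while the network with suppressive connections cannot satisfy all of its connections at once and settles into a less synchronized state. This only mirrors the qualitative point made in the article; the study itself relied on whole-cortex models built from brain imaging data.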

The researchers showed that computational models of the brain performed better when they included both cooperative and competitive interactions. This finding held for models based on the wiring of human, macaque and mouse brains alike.

“Crucially, computer models that included competitive interactions were not only more accurate overall compared with cooperative-only models, but also more individual-specific, better capturing the unique ‘brain fingerprint’ that distinguishes one person’s brain from another’s,” said Luppi.

“This is important because digital twins (i.e., virtual replicas of the brains or other organs of specific individuals) are increasingly proposed as tools for testing treatments virtually, before applying them to real people. If these models fail to capture the fundamental principles of each patient’s unique brain organization, their predictions won’t be personalized, and, in the worst case, even misleading.”

Implications for neuroscience research and brain-inspired computing

The results of this recent study highlight the importance of including both competitive and cooperative interactions between neural circuits, both in computer simulations of the mammalian brain and in brain-inspired computational models. In the future, the findings could inform the creation of better-performing or more human-like artificial intelligence (AI) systems, as well as more accurate digital twins.

“Using the competitive model to predict the effects of different treatments in patients will be one of the most exciting next steps towards development of better models for personalized medicine,” added Luppi. “Looking ahead, the general principles of brain organization across species offer a path forward for understanding the principles of intelligent architectures and using them to shape the next generation of artificial intelligence.”